AI-Based Image Reconstruction Techniques Could Lead to Misdiagnosis

Algorithms can create errors in multiple imaging systems, according to new tests.

Artificial intelligence (AI) and machine learning might not be as reliable in medical imaging as previously hoped. A new study suggests these tools are “highly unstable” in medical image reconstruction.

In a study published on May 11 in the

Not only did the techniques result in unwanted alterations to the data, but they also produced significant errors in the final images, the investigators said. Consequently, they warned, relying solely on an AI-based image reconstruction technique to make a diagnosis or to determine a treatment path could lead to unintentional harm to the patient.

“There’s been a lot of enthusiasm about AI in medical imaging, and it may well have the potential to revolutionize modern medicine; however, there are potential pitfalls that must not be ignored,” said Anders Hansen, Ph.D., from Cambridge’s Department of Applied Mathematics and Theoretical Physics. “We’ve found that AI techniques are highly unstable in medical imaging, so that small changes in the input may result in big changes in the output.”

Hansen led the study with Ben Adcock, Ph.D., from Simon Fraser University.

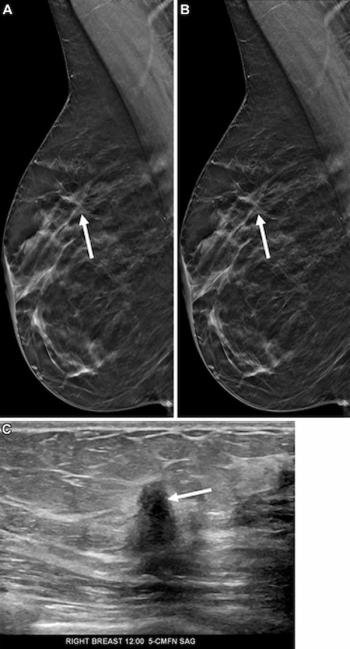

To test the reliability of these algorithms, Hansen and his colleagues from Norway, Portugal, Canada, and the United Kingdom created a series of tests designed to identify flaws in AI-based medical imaging systems, including MRI, CT, and nuclear magnetic resonance. They focused on three areas: instabilities associated with tiny movements; instabilities with respect to small structural changes, such as a brain image with or without a small tumor; and instabilities associated with changes in the number of samples.

According to their results, tiny movements led to myriad artefacts in final images. In addition, details were blurred or completely removed, and the quality of image reconstruction deteriorated with repeated subsampling. The errors occurred frequently across the different types of neural networks, they said.

“When it comes to critical decisions around human health, we can’t afford to have algorithms making mistakes,” Hansen said. “We found that the tiniest corruption, such as may be caused by a patient moving, can give a very different result if you’re using AI and deep learning to reconstruct medical imaging – meaning that these algorithms lack the stability they need.”

These findings are particularly concerning, they said, because the errors could potentially lead a radiologist to erroneously diagnose a medical issue.

“We developed the test to verify our thesis that deep learning techniques would be universally unstable in medical imaging,” Hansen said. “The reasoning for our prediction was that there is a limit to how good a reconstruction can be given restricted scan time. In some sense, modern AI techniques break this barrier, and as a result become unstable. We’ve shown mathematically that there is a price to pay for these instabilities, or to put it simply: there is still no such thing as a free lunch.”