Deep Learning AI Improves Breast Lesion Classification on Ultrasound

Algorithms outperformed providers in accurate, quick detection and classification.

A deep learning algorithm may classify breast masses on ultrasound images more accurately than radiologists can.

In a new study, published in the

“Artificial intelligence is good at identifying complex patterns in images and quantifying information that humans have difficulty detecting, thereby complementing clinical decision making,” said Wen He of Beijing Tian Tan Hospital, Capital Medical University, in China.

Artificial intelligence (AI) tools are already known to distinguish benign from malignant tumors, but He’s team took things a step further. They assessed whether AI could sort masses into the four breast mass categories commonly used in China: benign masses, malignant masses, inflammatory masses, and adenosis.

To make this determination, He collaborated with scientists from 13 hospitals to train convolutional neural networks (CNNs) to classify breast ultrasound images. They examined 15,648 images gathered from 3,623 patients, using half to train and half to test three different CNN models.
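The article says half of the data was used for training and half for testing, but not whether the split was made by image or by patient. The sketch below assumes a patient-level split, a common safeguard so that images from the same patient never appear in both sets. The function name `split_by_patient` and the toy records are hypothetical, for illustration only.

```python
import random
from collections import defaultdict


def split_by_patient(image_records, train_fraction=0.5, seed=0):
    """Split (patient_id, image_id) records into train and test lists.

    All images from one patient land on the same side, so the test set
    never contains a patient the model saw during training.
    """
    by_patient = defaultdict(list)
    for patient_id, image_id in image_records:
        by_patient[patient_id].append(image_id)

    # Shuffle patients (not images) with a fixed seed for reproducibility.
    patients = sorted(by_patient)
    random.Random(seed).shuffle(patients)
    n_train = round(len(patients) * train_fraction)
    train_patients = set(patients[:n_train])

    train, test = [], []
    for patient_id in patients:
        target = train if patient_id in train_patients else test
        target.extend((patient_id, img) for img in by_patient[patient_id])
    return train, test


# Toy data: 10 hypothetical patients with 4 images each.
records = [(f"p{p}", f"p{p}_img{i}") for p in range(10) for i in range(4)]
train, test = split_by_patient(records)
print(len(train), len(test))  # 20 20
```

With real data, patients contribute different numbers of images, so a half-and-half split of patients gives only an approximately even split of images.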

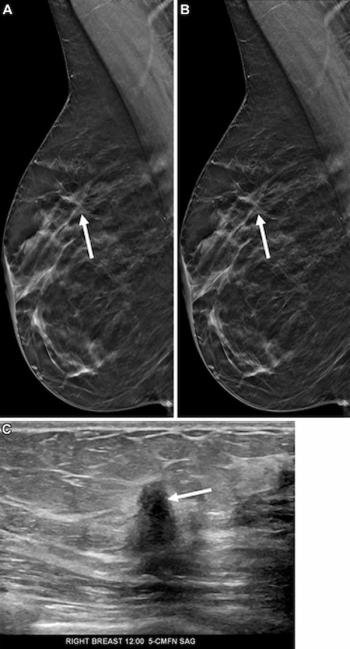

The first model relied only on 2D ultrasound intensity images for input, the second tacked on color flow Doppler images that offered details about blood flow around breast lesions, and the third also included pulsed wave Doppler images for spectral information about specific areas within the lesions.
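The article does not say how the second and third models combined the different image types. One common option is late fusion: process each image type separately, then concatenate the resulting feature vectors before classification. This also suits pulsed wave Doppler spectra, which do not share the spatial layout of the 2D image. The sketch below assumes that approach; `extract_features` is a hypothetical placeholder for a trained CNN branch, and the model names are illustrative.

```python
import numpy as np


def extract_features(image):
    # Hypothetical stand-in for a CNN branch: summarize an image as a
    # two-element vector (mean and standard deviation of pixel values).
    return np.array([image.mean(), image.std()])


# Which image types each of the three (illustrative) models receives.
MODEL_INPUTS = {
    "model_1": ["bmode"],
    "model_2": ["bmode", "color_doppler"],
    "model_3": ["bmode", "color_doppler", "pw_doppler"],
}


def fused_features(model_name, images):
    """Concatenate per-modality features for the inputs a model uses."""
    return np.concatenate(
        [extract_features(images[m]) for m in MODEL_INPUTS[model_name]]
    )


rng = np.random.default_rng(0)
images = {
    "bmode": rng.random((64, 64)),
    "color_doppler": rng.random((64, 64)),
    "pw_doppler": rng.random((32, 128)),  # spectral display, different shape
}
for name in MODEL_INPUTS:
    print(name, fused_features(name, images).shape)
# model_1 (2,)
# model_2 (4,)
# model_3 (6,)
```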

For each CNN, the team used two modules: a detection module that determined the position and size of breast lesions in the original 2D ultrasound images, and a classification module that received only the extracted image regions containing the detected lesions. The networks also included an output layer with four categories corresponding to the four breast mass classifications.
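The detect, crop, and classify flow described above can be sketched as follows. This is a minimal illustration, not the study's implementation: `fixed_detector` and `toy_scorer` are hypothetical stand-ins for the trained detection and classification networks, and the box format `(row, col, height, width)` is an assumption.

```python
import numpy as np

CLASSES = ("benign", "malignant", "inflammatory", "adenosis")


def crop(image, box):
    """Cut out the region (row, col, height, width) found by the detector."""
    r, c, h, w = box
    return image[r:r + h, c:c + w]


def softmax(logits):
    z = logits - logits.max()  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum()


def classify(image, detect, score):
    """Two-stage pipeline: detect a lesion, crop it, score only the crop."""
    box = detect(image)
    patch = crop(image, box)
    probs = softmax(score(patch))  # four-way output layer
    return CLASSES[int(np.argmax(probs))], probs


# Hypothetical stand-ins so the sketch runs end to end.
def fixed_detector(image):
    return (40, 50, 32, 24)


def toy_scorer(patch):
    # Returns fixed logits regardless of pixel content.
    return np.array([0.2, 1.5, -0.3, 0.1])


image = np.zeros((128, 128))
label, probs = classify(image, fixed_detector, toy_scorer)
print(label)  # malignant
```

Keeping detection separate means the classifier only ever sees the lesion region, which is the design the article describes.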

According to their results, the three models had similar accuracy, around 88 percent, although the model that combined 2D images with color flow Doppler data performed slightly better than the other two (89.2 percent versus 87.9 percent and 88.7 percent). In addition, they said, mass size did not appear to affect accuracy for malignant tumors, but it did affect accuracy for benign tumors.

Compared with 37 experienced ultrasonologists who read 50 randomly selected images, the CNN models performed significantly better, He said.

“The accuracy of the CNN model was 89.2 percent, with a processing time of less than two seconds,” he explained. “In contrast, the average accuracy of the ultrasonologists was 30 percent, with an average time of 314 seconds.”
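The figures in He's comparison can be put side by side with a little arithmetic. The article does not state whether the 314 seconds covers the full 50-image set or a single reading, so the ratio below assumes both times describe the same task; because the CNN time is given as "less than two seconds", the speed-up is a lower bound.

```python
cnn_accuracy, reader_accuracy = 0.892, 0.30
# CNN time is an upper bound ("less than two seconds"); assumes both
# times measure the same reading task.
cnn_seconds, reader_seconds = 2, 314

gap_points = (cnn_accuracy - reader_accuracy) * 100
speedup = reader_seconds / cnn_seconds

print(f"accuracy gap: {gap_points:.1f} percentage points")  # 59.2
print(f"speed-up: at least {speedup:.0f}x")                 # 157x
```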

Investigators tested their algorithms on ultrasound equipment from multiple vendors, which, the team said, shows both that the deep learning algorithms can help providers detect breast lesions and that they can be applied in a variety of hospital settings.

The ultimate goal, He said, is to incorporate AI into ultrasound diagnostic procedures to potentially accelerate earlier cancer detection.

“Because CNN models do not require any type of special equipment, their diagnostic recommendations could reduce predetermined biopsies, simplify the workflow of ultrasonologists, and enable targeted and refined treatment,” he said.

For more coverage based on industry expert insights and research, subscribe to the Diagnostic Imaging e-Newsletter