Image-Based Machine Learning Models for COVID-19 Diagnosis Not Suitable for Clinical Use

Study finds hundreds of COVID-19 machine learning models are riddled with flaws, making them unreliable.

Imaging-based machine learning models publicized as tools for diagnosing COVID-19 cannot produce the desired results and are not suitable for use, according to industry experts.

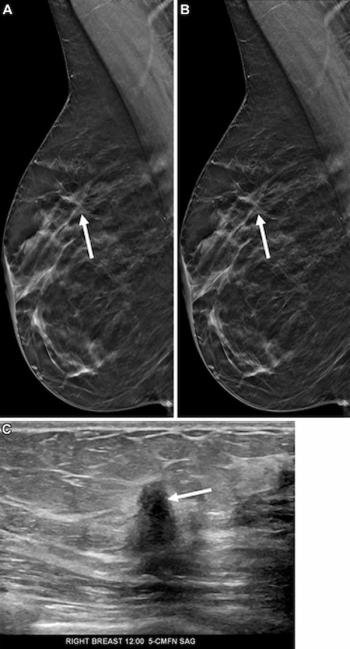

More than 300 COVID-19 machine learning models built with chest X-rays and chest CTs have been developed and discussed in the scientific literature since the beginning of the pandemic. But due to methodological shortcomings, biases, and bad data, they’re of no real clinical use, said researchers from the University of Cambridge. The team published their findings March 15 in

“Our review finds that none of the models identified are of potential clinical use due to methodological flaws and/or underlying biases,” said the team led by first author Michael Roberts, Ph.D., from the Cambridge department of applied mathematics and theoretical physics. “This is a major weakness, given the urgency with which validated COVID-19 models are needed.”

From the beginning of the pandemic, there was an overwhelming desire for tools that could help providers detect and diagnose the virus as quickly as possible. But because machine learning algorithms require high-quality data, the rapidly evolving landscape and the varied presentations and behavior of the disease made it a challenge to create reliable models.

“The international machine learning community went to enormous efforts to tackle the COVID-19 pandemic using machine learning,” said joint senior author James Rudd, MB, BCh, Ph.D., from the Cambridge department of medicine. “These early studies show promise, but they suffer from a high prevalence of deficiencies in methodology and reporting, with none of the literature we reviewed reaching the threshold of robustness and reproducibility essential to support use in clinical practice.”

For their study, the team identified 2,212 studies and whittled them down to 62 for a systematic review. Their analysis showed that none of the studies was acceptable: each had critical flaws, such as poor-quality data, poor application of machine learning methodology, poor reproducibility, or study design bias. For example, many training datasets that used images of adults for their COVID-19 data relied on pediatric images for their non-COVID-19 data.

“However, since children are far less likely to get COVID-19 than adults,” Roberts explained, “all the machine learning model could usefully do was to tell the difference between children and adults, since including images from children made the model highly biased.”
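The kind of confounding Roberts describes can, in principle, be caught from a dataset's metadata before any model is trained. The following is only a minimal sketch, not the Cambridge team's method: it assumes a hypothetical metadata table in which each image record carries a `label` and an `age_group` field (both names are illustrative), and it flags the case where the age group alone determines the label.

```python
from collections import Counter

def label_by_group(records, label_key="label", group_key="age_group"):
    """Count how often each (group, label) pair occurs in the metadata."""
    return Counter((r[group_key], r[label_key]) for r in records)

def is_perfectly_confounded(records, label_key="label", group_key="age_group"):
    """True when there are at least two groups and each group carries exactly
    one label, so the label can be read off the group alone."""
    labels_per_group = {}
    for r in records:
        labels_per_group.setdefault(r[group_key], set()).add(r[label_key])
    return len(labels_per_group) > 1 and all(
        len(labels) == 1 for labels in labels_per_group.values()
    )

# Hypothetical toy metadata mirroring the flaw described above:
# every COVID-19 image is from an adult, every non-COVID-19 image from a child.
records = (
    [{"label": "covid", "age_group": "adult"}] * 3
    + [{"label": "non-covid", "age_group": "pediatric"}] * 3
)

counts = label_by_group(records)
confounded = is_perfectly_confounded(records)
print(counts)
print("Age group fully determines label:", confounded)
```

A dataset that fails a check like this gives the model an easy shortcut: it can separate the classes by patient age without learning anything about the disease.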

In many cases, studies did not specify the origin of their data, or they used the same data to both train and test models. Other studies used publicly available “Frankenstein datasets” that had evolved and merged over time and were no longer able to provide reproducible results.
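One common safeguard against testing on training data is to split at the patient level and then verify that the two sets share nothing. The sketch below illustrates that idea under stated assumptions; it is not drawn from the review itself. The mapping from patient IDs to image IDs, the function names, and the 20% test fraction are all hypothetical.

```python
import random

def split_by_patient(image_ids_by_patient, test_fraction=0.2, seed=0):
    """Split at the patient level so no patient's images land in both sets."""
    patients = sorted(image_ids_by_patient)
    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    rng.shuffle(patients)
    n_test = max(1, round(len(patients) * test_fraction))
    test_patients = set(patients[:n_test])

    train, test = [], []
    for patient, images in image_ids_by_patient.items():
        (test if patient in test_patients else train).extend(images)
    return train, test

def overlap(train, test):
    """Return image IDs that appear in both sets; should be empty."""
    return set(train) & set(test)

# Hypothetical toy data: five patients, two images each.
images = {f"patient_{i}": [f"p{i}_img{j}" for j in range(2)] for i in range(5)}

train_ids, test_ids = split_by_patient(images)
leaked = overlap(train_ids, test_ids)
print(len(train_ids), "training images,", len(test_ids), "test images")
print("Images in both sets:", leaked)
```

Splitting by patient rather than by image matters because several scans from the same person tend to look alike, so an image-level split can leak information even when no single file is duplicated.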

In addition, the team noted, many machine learning models were trained on datasets from single institutions, which provide too little data, with too little variety, for the models to be reliable in a different facility or geographic location.

“The data needs to be diverse and ideally international, or else you’re setting your machine learning model up to fail when it’s tested more widely,” Rudd said.

But it is possible to salvage machine learning for COVID-19 and make it useful and effective as the pandemic lingers, the team said. To develop more effective models, they offered these suggestions:

- Avoid using public datasets as they can lead to significant bias risks

- Use appropriately sized, diverse datasets to ensure models are useful across different demographic groups

- Curate independent external datasets

- Provide sufficient documentation in manuscripts to ensure results are reproducible and to increase the likelihood models will be integrated into future clinical trials that will establish independent technical and clinical validation, as well as cost-effectiveness
