Study Raises Doubt About AI Sensitivity for Smaller and Multiple Findings on Chest X-Rays

In a multicenter study examining four commercially available artificial intelligence (AI) software products for chest X-rays in over 2,000 patients, researchers found sensitivity rates ranging from 33 to 61 percent for vague airspace disease and from 9 to 94 percent for small pneumothorax and pleural effusion.

Current artificial intelligence (AI) software for chest X-rays may have significant limitations, with researchers noting a higher number of false positives in comparison to radiologist assessment as well as significantly decreased sensitivity for small pneumothorax, pleural effusion, and vague airspace disease.

For the study, recently published in Radiology, researchers assessed the performance of four commercially available AI software products in detecting findings on chest X-rays from 2,040 patients (median age of 72). The products included: Annalise Enterprise CXR version 2.2 (Annalise.ai); SmartUrgencies version 1.24 (Milvue); ChestEye version 2.6 (Oxipit); and AI-Rad Companion version 10 (Siemens Healthineers).

For airspace disease, the researchers found that the AI tools had overall sensitivity rates ranging between 72 and 91 percent with an area under the receiver operating characteristic curve (AUC) ranging between 83 and 88 percent. They also noted overall AUCs ranging between 89 and 97 percent for pneumothorax and between 94 and 97 percent for pleural effusion. For normal and single findings, the AI tools demonstrated specificity rates ranging from 85 to 96 percent for airspace disease, 99 to 100 percent for pneumothorax, and 95 to 100 percent for pleural effusion, according to the study.

However, the study authors found that all of the AI tools had a higher false-positive rate for airspace disease (ranging from 13.7 to 36.9 percent) in comparison to radiologist assessment (11.6 percent). For pleural effusion, researchers also noted that three of the AI software modalities had higher false-positive rates (ranging from 7.7 to 16.4 percent) in contrast to radiologist reports (4.2 percent).

The research findings also revealed lower specificity rates of the AI tools when there were four or more findings on chest X-ray with ranges of 27 to 69 percent for airspace disease, 96 to 99 percent for pneumothorax and 65 to 92 percent for pleural effusion. While the AI tools had sensitivity rates ranging between 81 and 100 percent for larger findings on chest X-rays, the study authors noted lower sensitivity for vague airspace disease (33 to 61 percent) and small pneumothorax and pleural effusion (9 to 94 percent).

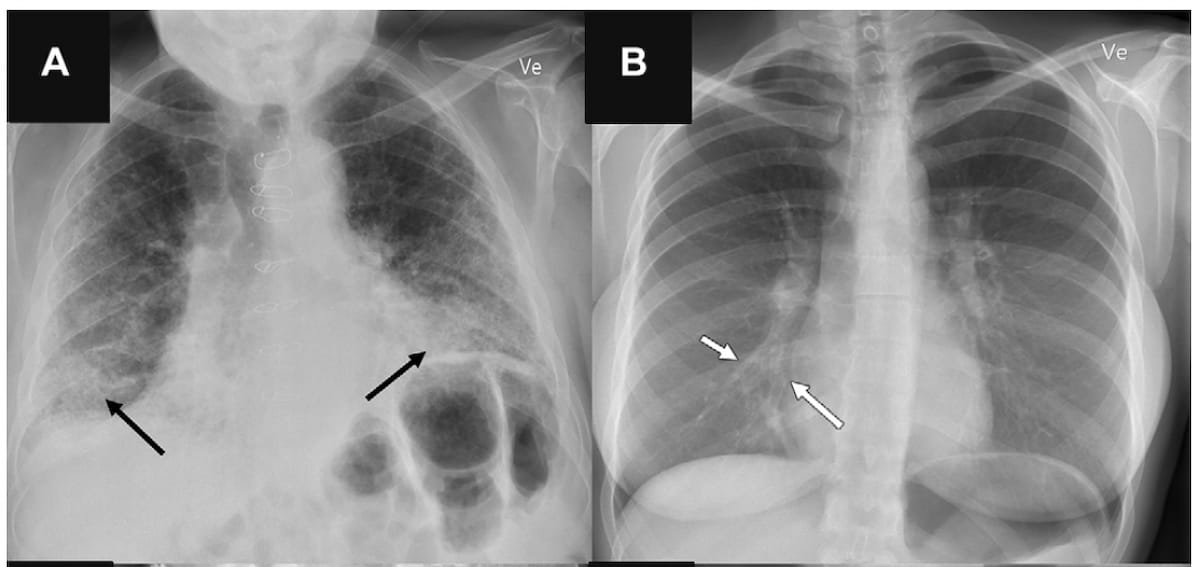

Here one can see an example of false-positive findings (A) and false-negative findings (B) identified by artificial intelligence (AI) software. The study authors noted that all of the AI tools in the study had higher false-positive rates for airspace disease and three of the four AI software modalities had higher false-positive rates for pleural effusion in comparison to radiologist assessment. (Images courtesy of Radiology.)

“The AI tools achieved moderate to high sensitivities ranging 62%–95% and excellent negative predictive values greater than 92%. The positive predictive values of AI tools were lower and showed more variation, ranging 37%–86%, most often with false-positive rates higher than the clinical radiology reports,” wrote study co-author Michael Brun Andersen, M.D., Ph.D., who is affiliated with the Department of Radiology at Herlev and Gentofte Hospital in Copenhagen, Denmark.

“Furthermore, we found that AI sensitivity generally was lower for smaller-sized target findings and that AI specificity generally was lower for anteroposterior chest radiographs and those with concurrent findings.”

Given the ranges in sensitivity and specificity rates the researchers saw with the AI software examined in the study, they emphasized appropriate threshold adjustment when implementing these tools in practice.

“ … When implementing an AI tool, it seems crucial to understand the disease prevalence and severity of the site and that changing the AI tool threshold after implementation may be needed for the system to have the desired diagnostic ability,” added Andersen and colleagues. “Furthermore, the low sensitivity observed for several AI tools in our study suggests that, like clinical radiologists, the performance of AI tools decreases for more subtle findings on chest radiographs.”
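The link between disease prevalence and predictive values that the authors describe can be illustrated with a short calculation. The following is a minimal sketch and is not taken from the study: the function name `predictive_values` and the example sensitivity (0.80), specificity (0.90), and prevalence values are illustrative assumptions. It applies the standard definitions of positive and negative predictive value to show how the same tool can yield a much lower positive predictive value at a site where the finding is rare.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """Return (PPV, NPV) for a test with the given sensitivity and
    specificity, applied to a population with the given prevalence.

    All inputs are fractions between 0 and 1. The figures used below
    are hypothetical and are NOT results from the study.
    """
    # Expected fractions of the population in each confusion-matrix cell.
    true_pos = sensitivity * prevalence
    false_neg = (1 - sensitivity) * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    true_neg = specificity * (1 - prevalence)

    ppv = true_pos / (true_pos + false_pos)   # P(disease | positive result)
    npv = true_neg / (true_neg + false_neg)   # P(no disease | negative result)
    return ppv, npv


if __name__ == "__main__":
    # Same hypothetical tool, three hypothetical site prevalences.
    for prevalence in (0.02, 0.10, 0.30):
        ppv, npv = predictive_values(0.80, 0.90, prevalence)
        print(f"prevalence={prevalence:.2f}  PPV={ppv:.2f}  NPV={npv:.2f}")
```

With sensitivity and specificity held fixed, the positive predictive value rises as prevalence rises while the negative predictive value falls. This is one reason a threshold that works well at one site may need adjustment at another.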

(Editor’s note: For related content, see “FDA Clears AI-Powered Software for Lung Nodule Detection on X-Rays,” “Annalise.ai Obtains Seven New AI FDA 510(k) Clearances for Head CT and Chest X-Ray” and “Autonomous AI Shows Nearly 27 Percent Higher Sensitivity than Radiology Reports for Abnormal Chest X-Rays.”)

In regard to study limitations, the authors noted the retrospective nature of a study that evaluated the standalone performance of the four AI models that were clinically approved for adjunctive reading with radiologist assessment. The radiologists in the study had access to lateral chest X-rays, clinical information, and prior imaging but the AI modalities did not have access to that data, according to the study authors. The researchers also suggested that the high prevalence of multiple chest X-ray findings and a high median patient age of the cohort may limit general extrapolation of the study findings beyond hospital settings.

