An emerging multimodal transformer-based artificial intelligence (AI) model that integrates clinical data with chest X-ray findings reportedly offers significantly higher areas under the receiver operating characteristic curve (AUCs) than either data source alone for more than 20 conditions, including acute cerebrovascular disease, conduction disorders and pneumonia.

For the retrospective study, recently published in Radiology, the researchers trained the transformer-based AI model on clinical data and chest X-ray findings from two large patient databases comprising more than 62,000 intensive care unit (ICU) patients in total. The study authors then compared the multimodal AI model with the use of clinical data alone and chest X-ray findings alone for a variety of conditions.

Overall, the researchers found that the multimodal AI model had a mean AUC of 77 percent for the diagnosis of 25 conditions, compared with 70 percent for chest X-ray findings alone and 72 percent for clinical parameters alone.

Specifically, the multimodal AI model had an AUC 21 percentage points higher (91 percent) than chest radiographs alone (70 percent) for diagnosing acute cerebrovascular disease, according to the study. For hypertension with complications and secondary hypertension, the study authors noted a 77 percent AUC for multimodal AI, compared with 71 percent for X-ray findings only and 68 percent for clinical parameters only.

For congestive heart failure (non-hypertensive), multimodal AI had an AUC 11 percentage points higher (82 percent) than clinical parameters alone (71 percent). The researchers also noted an 86 percent AUC for the multimodal AI model for diagnosing adult respiratory failure, insufficiency, and arrest, compared with 76 percent for chest X-rays only and 82 percent for clinical parameters only.
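The AUC gains quoted in these comparisons are differences between two percentages, so they are percentage-point gaps rather than relative increases. The short Python sketch below, which uses only the AUC values reported in the article, shows both measures side by side. The dictionary layout and the `gains` function name are illustrative choices, not anything taken from the study.

```python
# AUCs (in percent) as reported in the article; None marks a comparison
# the article does not report.
REPORTED_AUCS = {
    "acute cerebrovascular disease": {"multimodal": 91, "xray_only": 70, "clinical_only": None},
    "hypertension with complications and secondary hypertension": {"multimodal": 77, "xray_only": 71, "clinical_only": 68},
    "congestive heart failure (non-hypertensive)": {"multimodal": 82, "xray_only": None, "clinical_only": 71},
    "adult respiratory failure, insufficiency, and arrest": {"multimodal": 86, "xray_only": 76, "clinical_only": 82},
}


def gains(multimodal: float, baseline: float) -> tuple[float, float]:
    """Return (absolute gain in percentage points, relative gain in percent)."""
    absolute = multimodal - baseline
    relative = 100.0 * absolute / baseline
    return absolute, relative


for condition, aucs in REPORTED_AUCS.items():
    for baseline_name in ("xray_only", "clinical_only"):
        baseline = aucs[baseline_name]
        if baseline is None:
            continue  # skip comparisons the article does not report
        absolute, relative = gains(aucs["multimodal"], baseline)
        print(f"{condition} vs {baseline_name}: +{absolute:.0f} points ({relative:.1f}% relative)")
```

For acute cerebrovascular disease, for example, the 21-point gap over chest radiographs alone corresponds to a relative AUC increase of 30 percent (21/70).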

“For both investigated data sets of radiographs with accompanying non-imaging data, we found a consistent increase in diagnostic performance when non-imaging clinical data were used along with imaging data. … it is expected that multimodal models that can combine a vast array of modalities will be dominating the future artificial intelligence landscape,” wrote study co-author Daniel Truhn, M.D., M.Sc., who is affiliated with the Department of Diagnostic and Interventional Radiology at University Hospital Aachen in Aachen, Germany, and colleagues.

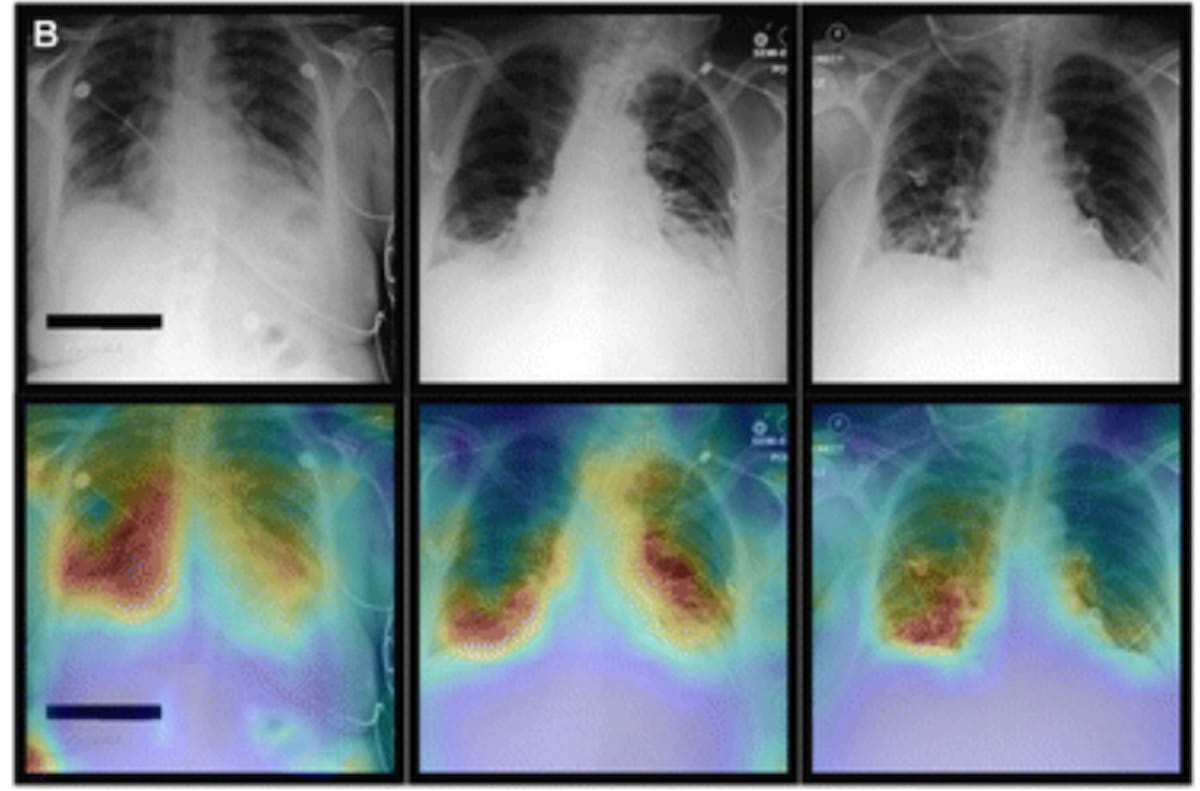

The study authors emphasized that attention maps generated by the multimodal transformer AI model show the highest values in radiograph subregions that may be more indicative of pathology.

Three Key Takeaways

- Improved diagnostic performance. The multimodal artificial intelligence (AI) model, which combines clinical data with chest X-ray findings, demonstrated superior diagnostic performance compared to using clinical parameters or chest X-rays alone for various conditions, including congestive heart failure, chronic kidney disease, hypertension, pneumonia, and more.

- Increased AUC for specific conditions. The multimodal AI model showed substantial improvements in the area under the receiver operating characteristic curve (AUC) for specific conditions. For instance, its AUC was 21 percentage points higher than that of chest X-rays alone in diagnosing acute cerebrovascular disease and 11 percentage points higher than that of clinical parameters alone for congestive heart failure (non-hypertensive).

- Potential for future AI applications. The study suggests that the integration of clinical and imaging data in multimodal AI models holds promise for enhancing diagnostic accuracy in various medical conditions. The authors anticipate that such models, capable of combining different data modalities, may play a significant role in the future of artificial intelligence in health care.

For some conditions, multimodal AI offered only small gains over chest radiographs or clinical parameters alone. Researchers noted a 75 percent AUC for multimodal AI in detecting gastrointestinal hemorrhage, compared with 74 percent based on clinical parameters only. For chronic obstructive pulmonary disease and bronchiectasis, multimodal AI had a 76 percent AUC versus 75 percent for chest X-ray findings alone.

“ … Diagnostic performance may not inevitably benefit from integrating both imaging and non-imaging data. For instance, diabetes is predominantly diagnosed without imaging, while pneumothorax diagnosis relies primarily upon imaging,” added Truhn and colleagues.

(Editor’s note: For related content, see “Study Raises Doubt About AI Sensitivity for Smaller and Multiple Findings on Chest X-Rays,” “FDA Clears AI-Powered Software for Lung Nodule Detection on X-Rays” and “Autonomous AI Shows Nearly 27 Percent Higher Sensitivity than Radiology Reports for Abnormal Chest X-Rays.”)

Regarding study limitations, the authors acknowledged that it remains to be seen how well the transformer AI model would perform with three-dimensional imaging data, as the study focused on two-dimensional imaging data. The study authors also noted that the amount of data available for training the model’s architecture was limited because the model’s neural network was tested in a supervised learning context.