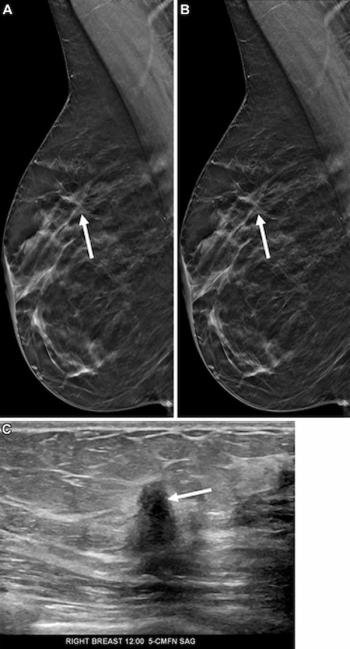

Can AI-Powered Mammography Screening be a Game Changer?

In a retrospective study involving mammography screening in over 114,000 women, researchers found that an artificial intelligence model had comparable specificity and sensitivity to radiologist screenings, reduced false positive results by 25 percent and reduced radiologist workload by more than 62 percent.

Emerging research suggests that artificial intelligence (AI) could have a significant role in breast cancer screening.

Using AI alone for the initial read would also have spared radiologists from reading 71,585 of the 114,421 mammograms, because exams the AI classified as normal or suspicious were excluded from radiologist review, reducing the radiologist workload by over 62 percent, according to the study authors.
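The workload figure follows directly from the two counts reported by the study authors. A minimal Python sketch of that arithmetic (the variable names are illustrative and not taken from the study):

```python
# Counts as reported in the article.
total_mammograms = 114_421
not_read_by_radiologists = 71_585  # exams handled by the AI system alone

# Share of mammograms radiologists would not have needed to read.
workload_reduction = not_read_by_radiologists / total_mammograms

# Exams that would still go to radiologists.
still_read = total_mammograms - not_read_by_radiologists

print(f"Workload reduction: {workload_reduction:.1%}")  # 62.6%
print(f"Mammograms still read by radiologists: {still_read:,}")  # 42,836
```

This puts the reduction at roughly 62.6 percent, consistent with the "over 62 percent" reported.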

“… (The) incorporation of an artificial intelligence (AI) system in population-based breast cancer screening programs could potentially improve screening outcomes and may considerably reduce the workload of radiologists,” wrote Martin Lillholm, Ph.D., a professor in the Department of Computer Science at the University of Copenhagen in Denmark, and colleagues.

In the main simulation study of AI-based screening, the researchers used the AI system Transpara (ScreenPoint Medical), which has received 510(k) clearance from the Food and Drug Administration (FDA) as well as the Conformité Européenne (CE) mark, to assess whether mammogram findings were normal, of moderate risk or suspicious.
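The three-way triage in the simulation can be pictured as a simple rule applied to each exam, with only moderate-risk exams passed on to radiologists. The sketch below is an illustration under stated assumptions: the score scale, the two cutoffs (`LOW_CUTOFF`, `HIGH_CUTOFF`), the function names, and the example scores are all hypothetical and are not taken from the study or from the Transpara product.

```python
from collections import Counter

# Hypothetical cutoffs on a hypothetical 0-10 risk score.
# These are assumptions for illustration, not values from the study.
LOW_CUTOFF = 3.0
HIGH_CUTOFF = 9.0

def triage(score: float) -> str:
    """Assign an exam to one of the three categories named in the article."""
    if score < LOW_CUTOFF:
        return "normal"
    if score >= HIGH_CUTOFF:
        return "suspicious"
    return "moderate risk"

def needs_radiologist(category: str) -> bool:
    """In the simulated workflow, only moderate-risk exams are read by radiologists."""
    return category == "moderate risk"

# Made-up example scores, purely for demonstration.
scores = [0.4, 2.9, 5.1, 7.8, 9.6, 1.2]
categories = [triage(s) for s in scores]
counts = Counter(categories)
to_read = sum(needs_radiologist(c) for c in categories)

print(counts)
print(f"Read by radiologists: {to_read} of {len(scores)}")
```

With these made-up scores, two of the six exams fall in the moderate-risk band and would be read by radiologists; the real proportions depend entirely on the cutoffs a screening program chooses.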

When results were stratified by breast density according to the Breast Imaging Reporting and Data System (BI-RADS), Lillholm and colleagues noted that, compared with radiologist assessment, AI screening had lower sensitivity as BI-RADS density increased but higher specificity across all BI-RADS densities. The study authors also noted that smaller sample sizes precluded establishing noninferiority for the AI system within individual BI-RADS density groups.

Other limitations of the study, according to Lillholm and colleagues, included a potentially higher likelihood of radiologists diagnosing cancer in a subgroup of the main study population (after initial AI screening) as opposed to standard screening. They also noted that the data came from a single center assessing one AI system, which may preclude the extrapolation of results to other institutions with different screening regimens and/or AI systems.